Google Maps Restaurant Scraper – Complete Data Extraction Tool

The Problem That Started It All

Picture this: You’re a market researcher trying to analyze restaurant trends in New York City. You need data on 100+ restaurants—their ratings, prices, contact info, service options, and more.

Your options?

- Manual copy-paste: 5-10 minutes per restaurant, so roughly 8-17 hours of mind-numbing work for 100 restaurants

- Hire a VA: $200-500 for the project

- Use expensive data APIs: $100-500/month subscriptions

There had to be a better way.

That’s when I decided to build a Google Maps scraper that could extract comprehensive restaurant data automatically and export it to clean Excel files. What started as a weekend project turned into a powerful tool that saves hours of manual work.

What Makes This Scraper Different?

Most Google Maps scrapers out there are basic—they grab surface-level data like names and ratings. But real business intelligence requires depth.

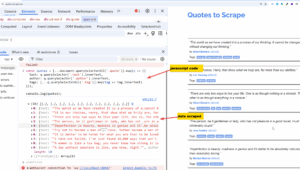

This scraper uses a two-stage extraction system:

Stage 1: The Wide Net

First, it casts a wide net across Google Maps search results:

- Scrolls through all available results (not just the first page)

- Collects basic info: names, ratings, review counts

- Most importantly: captures every restaurant’s unique URL

Stage 2: The Deep Dive

Then comes the magic. For each URL collected, the scraper:

- Visits the restaurant’s detailed Google Maps page

- Extracts 20+ data fields

- Handles missing information gracefully

- Compiles everything into a structured dataset

The result? A comprehensive Excel file with everything you need for analysis, outreach, or competitive research.
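The two stages above can be sketched as a small pipeline. This is a minimal illustration, not the tool's actual code: `run_two_stage_scrape`, `collect_listing_urls`, and `extract_details` are hypothetical names, and the two callables stand in for the Selenium-driven steps so the control flow can run without a browser.

```python
from typing import Callable, Dict, Iterable, List


def run_two_stage_scrape(
    query: str,
    collect_listing_urls: Callable[[str], Iterable[str]],
    extract_details: Callable[[str], Dict[str, str]],
) -> List[Dict[str, str]]:
    """Stage 1 gathers listing URLs; Stage 2 visits each one for details."""
    # Stage 1: the wide net. De-duplicate while preserving result order.
    urls = list(dict.fromkeys(collect_listing_urls(query)))

    # Stage 2: the deep dive. One record per URL.
    records = []
    for url in urls:
        record = dict(extract_details(url))
        record["maps_url"] = url  # keep the source URL alongside the fields
        records.append(record)
    return records


# Stand-in functions (assumptions) so the sketch runs without a browser.
def fake_collect(query):
    return ["https://example.com/a", "https://example.com/b", "https://example.com/a"]


def fake_extract(url):
    return {"name": "Place " + url[-1].upper()}


for row in run_two_stage_scrape("restaurants in new york", fake_collect, fake_extract):
    print(row)
```

Keeping the stages as separate functions means a failure in one detail page never costs you the URL list you already collected.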

The Data Goldmine: 20+ Fields Extracted

Here’s what the scraper captures from each restaurant:

🎯 Core Metrics

- Restaurant name

- Star rating (1-5)

- Total review count

- Price range ($, $$, $$$, $$$$)

- Cuisine type

📞 Contact & Location

- Complete address

- Phone number

- Official website

- Google Plus Code

- Direct Google Maps URL

⏰ Operational Details

- Current status (Open/Closed)

- Hours of operation

- Service options (Dine-in, Takeout, Delivery)

🔗 Digital Presence

- Menu link

- Reservation link

- Recent review snippets

- Popular times availability

♿ Additional Information

- Accessibility features

- Full restaurant description

This isn’t just data—it’s actionable business intelligence.
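One way to picture a single row of that dataset is as a record with one attribute per field listed above. The sketch below is an assumption about shape only: the class name `RestaurantRecord` and every attribute name are hypothetical, chosen to mirror the list, and the real spreadsheet columns may be named differently.

```python
from dataclasses import asdict, dataclass

MISSING = "N/A"  # placeholder used when a listing lacks a field


@dataclass
class RestaurantRecord:
    # Core metrics
    name: str = MISSING
    rating: str = MISSING
    review_count: str = MISSING
    price_range: str = MISSING
    cuisine: str = MISSING
    # Contact & location
    address: str = MISSING
    phone: str = MISSING
    website: str = MISSING
    plus_code: str = MISSING
    maps_url: str = MISSING
    # Operational details
    status: str = MISSING
    hours: str = MISSING
    service_options: str = MISSING
    # Digital presence
    menu_link: str = MISSING
    reservation_link: str = MISSING
    review_snippets: str = MISSING
    popular_times_available: str = MISSING
    # Additional information
    accessibility: str = MISSING
    description: str = MISSING


record = RestaurantRecord(name="Example Bistro", rating="4.5", price_range="$$")
row = asdict(record)  # plain dict, ready to become one spreadsheet row
print(row["name"], row["phone"])  # Example Bistro N/A
```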

Real-World Use Cases

1. Market Research & Competitive Analysis

A restaurant owner in Chicago used this scraper to analyze 200+ pizza places in the city. Within 30 minutes, they had:

- Average ratings by neighborhood

- Price distribution analysis

- Service options comparison

- Website adoption rates

Result: Identified an underserved neighborhood with high demand but few delivery options.

2. Lead Generation for B2B Services

A web design agency scraped 500 restaurants in their city and filtered for:

- Businesses with 4+ star ratings (successful, worth investing in marketing)

- Missing websites or outdated menu links

- Phone numbers for direct outreach

Result: Generated 150 qualified leads in one afternoon.
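A filter along those lines might look like the sketch below. It is illustrative only: `find_leads` and the keys `rating`, `phone`, and `website` are hypothetical names, rows are assumed to be plain dictionaries with "N/A" for missing values, and it covers only the "missing website" case, not outdated menu links.

```python
from typing import Dict, List

MISSING = "N/A"


def find_leads(rows: List[Dict[str, str]], min_rating: float = 4.0) -> List[Dict[str, str]]:
    """Keep well-rated listings that have a phone number but no website."""
    leads = []
    for row in rows:
        try:
            rating = float(row.get("rating", MISSING))
        except (TypeError, ValueError):
            continue  # rating missing or unparseable: skip the listing
        has_phone = row.get("phone", MISSING) != MISSING
        no_website = row.get("website", MISSING) == MISSING
        if rating >= min_rating and has_phone and no_website:
            leads.append(row)
    return leads


sample = [
    {"name": "A", "rating": "4.6", "phone": "555-0100", "website": "N/A"},
    {"name": "B", "rating": "4.8", "phone": "555-0101", "website": "https://example.com"},
    {"name": "C", "rating": "3.9", "phone": "555-0102", "website": "N/A"},
    {"name": "D", "rating": "N/A", "phone": "555-0103", "website": "N/A"},
]
print([row["name"] for row in find_leads(sample)])  # ['A']
```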

3. Investment & Location Analysis

A commercial real estate investor used the scraper to:

- Map restaurant density by neighborhood

- Identify cuisine gaps in emerging areas

- Analyze price points vs. location demographics

Result: Made data-driven decisions on property investments.

4. Academic Research

Urban planning researchers used the tool to study:

- Restaurant distribution patterns

- Correlation between ratings and price points

- Service option adoption rates post-pandemic

Result: Published findings on urban food accessibility.

Technical Deep Dive: How It Works

The Architecture

User Input (Search Query)

↓

Language Detection (13+ languages)

↓

Stage 1: Scroll & Collect URLs

↓

Stage 2: Visit Each URL

↓

Extract 20+ Data Fields

↓

Export to Excel

Key Technical Features

1. Smart Scrolling Algorithm

Google Maps loads results dynamically as you scroll. The scraper:

- Detects the scrollable container

- Scrolls incrementally

- Checks for new content after each scroll

- Stops when no new results appear (with 3-attempt verification)
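Here's a minimal sketch of that scroll loop. It is not the scraper's exact code: `scroll_until_stable` is a hypothetical helper, `panel` is assumed to be the WebElement for the scrollable results container, and `count_results` is a callable you supply that returns how many results are currently loaded. The only Selenium call it relies on is `driver.execute_script`.

```python
import time


def scroll_until_stable(driver, panel, count_results, pause=2.0, max_stalls=3):
    """Scroll a results panel until no new results appear max_stalls times in a row."""
    stalls = 0
    seen = count_results()
    while stalls < max_stalls:
        # Scroll the container (not the window) to its current bottom.
        driver.execute_script(
            "arguments[0].scrollTop = arguments[0].scrollHeight;", panel
        )
        time.sleep(pause)  # give the page time to load the next batch
        now = count_results()
        if now > seen:
            seen, stalls = now, 0  # new content: reset the stall counter
        else:
            stalls += 1  # nothing new: count one failed attempt
    return seen
```

Requiring several consecutive empty scrolls, rather than stopping at the first one, guards against a slow network making the list look finished when it isn't.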

2. Multi-Language Support

The scraper automatically detects location and sets the appropriate language:

- “restaurants in tokyo” → Japanese (ja-JP)

- “cafes in paris” → French (fr-FR)

- “pizza in new york” → English (en-US)

This ensures accurate data extraction regardless of location.
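The mapping from query to language can be as simple as a lookup on place names. The sketch below assumes that approach; the table holds only the three examples above, and `detect_locale`, `LOCALE_BY_PLACE`, and the `override` parameter are hypothetical names rather than the tool's own.

```python
from typing import Optional

# Assumed lookup table; a real one would cover many more places.
LOCALE_BY_PLACE = {
    "tokyo": "ja-JP",
    "paris": "fr-FR",
    "new york": "en-US",
}
DEFAULT_LOCALE = "en-US"


def detect_locale(query: str, override: Optional[str] = None) -> str:
    """Return the locale for the first known place name found in the query."""
    if override:
        return override  # a manually chosen language always wins
    text = query.lower()
    for place, locale in LOCALE_BY_PLACE.items():
        if place in text:
            return locale
    return DEFAULT_LOCALE  # unknown location: fall back to English


print(detect_locale("restaurants in tokyo"))  # ja-JP
print(detect_locale("cafes in paris"))        # fr-FR
print(detect_locale("pizza in new york"))     # en-US
```

One common way to apply the result is Chrome's `--lang` command-line switch, though whether the scraper does it that way is an assumption here.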

3. Robust Error Handling

Not all restaurants have complete information. The scraper:

- Uses try/except blocks for each data field

- Records “N/A” for missing data instead of crashing

- Logs errors for debugging

- Continues scraping even if one listing fails
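The per-field pattern can be sketched like this. The names `safe_extract` and `extract_record` are hypothetical, and each extractor is assumed to be a zero-argument callable that returns the field's text or raises if the element is missing.

```python
import logging

logger = logging.getLogger("scraper")
MISSING = "N/A"


def safe_extract(field_name, extractor):
    """Run one field extractor; return "N/A" instead of raising."""
    try:
        value = extractor()
    except Exception as exc:  # broad on purpose: one bad field must not stop the run
        logger.warning("Could not extract %s: %s", field_name, exc)
        return MISSING
    return value if value else MISSING  # treat empty strings as missing too


def extract_record(extractors):
    """Apply every extractor independently so failures stay isolated."""
    return {name: safe_extract(name, fn) for name, fn in extractors.items()}


def broken_phone():
    raise LookupError("phone element not found")


print(extract_record({"name": lambda: "Example Bistro", "phone": broken_phone}))
# {'name': 'Example Bistro', 'phone': 'N/A'}
```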

4. Anti-Detection Measures

To avoid being blocked:

- Disables automation flags

- Adds natural delays between requests (2-3 seconds)

- Mimics human browsing patterns

- Uses proper user agents
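For illustration, settings like these are often applied to Selenium's Chrome options. This is a sketch, not the scraper's actual configuration: `configure_options` and `polite_pause` are hypothetical helpers, and `options` is assumed to be a `selenium.webdriver.ChromeOptions` instance (any object with `add_argument` and `add_experimental_option` works).

```python
import random
import time


def configure_options(options, user_agent=None):
    """Apply commonly used Chrome settings to a Selenium-style options object."""
    # Settings commonly used to switch off Chrome's automation indicators.
    options.add_argument("--disable-blink-features=AutomationControlled")
    options.add_experimental_option("excludeSwitches", ["enable-automation"])
    if user_agent:
        # Caller-supplied user agent string; none is hard-coded here.
        options.add_argument(f"--user-agent={user_agent}")
    return options


def polite_pause(low=2.0, high=3.0, sleep=time.sleep):
    """Sleep for a random interval between low and high seconds; return it."""
    delay = random.uniform(low, high)
    sleep(delay)
    return delay
```

Randomizing the pause within the 2-3 second window, rather than sleeping a fixed amount, keeps the request rate modest without producing a perfectly regular rhythm.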

The Technology Stack

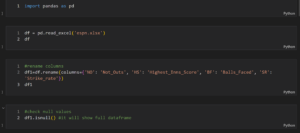

- Python 3.7+: Core programming language

- Selenium WebDriver: Browser automation

- Pandas: Data manipulation and Excel export

- ChromeDriver: Automated Chrome browsing

- OpenPyXL: Excel file creation
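To show how the pandas and OpenPyXL pieces of this stack fit together, here is a minimal export sketch. It is an assumption about the general approach, not the tool's `export_to_excel` method: `normalize_rows` and `export_rows_to_excel` are hypothetical names, and rows are assumed to be plain dictionaries.

```python
from typing import Dict, List

MISSING = "N/A"


def normalize_rows(rows: List[Dict[str, str]]) -> List[Dict[str, str]]:
    """Give every row the same columns, filling gaps with "N/A"."""
    # Collect column names in first-seen order across all rows.
    columns = list(dict.fromkeys(key for row in rows for key in row))
    return [{col: row.get(col, MISSING) for col in columns} for row in rows]


def export_rows_to_excel(rows: List[Dict[str, str]], path: str) -> None:
    """Write rows to an .xlsx file (requires pandas and openpyxl)."""
    import pandas as pd  # imported here so normalize_rows works without pandas

    frame = pd.DataFrame(normalize_rows(rows))
    # index=False drops the numeric row index from the spreadsheet.
    frame.to_excel(path, index=False, engine="openpyxl")
```

A call such as `export_rows_to_excel(rows, "nyc_restaurants.xlsx")` would then produce one sheet with one row per restaurant.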

Installation & Setup (5 Minutes)

Getting started is straightforward:

    # Step 1: Install Python packages
    pip install selenium pandas openpyxl

    # Step 2: That's it! Selenium 4.6+ downloads and manages ChromeDriver automatically

Usage Examples

Example 1: Basic Restaurant Scraping

    scraper = GoogleMapsDetailedScraper()
    try:
        # Scrape the query, then write the results to Excel
        data = scraper.scrape_complete_data("restaurants in new york")
        scraper.export_to_excel(data, 'nyc_restaurants.xlsx')
    finally:
        # Always close the scraper, even if scraping fails
        scraper.close()

Output: Excel file with 50-100+ restaurants

Example 2: Specific Cuisine Research

    scraper = GoogleMapsDetailedScraper()
    try:
        # Narrow the query to a single cuisine and city
        data = scraper.scrape_complete_data("italian restaurants in boston")
        scraper.export_to_excel(data, 'boston_italian.xlsx')
    finally:
        scraper.close()

Example 3: International Locations

    scraper = GoogleMapsDetailedScraper()
    try:
        # Same call, different country
        data = scraper.scrape_complete_data("sushi restaurants in tokyo")
        scraper.export_to_excel(data, 'tokyo_sushi.xlsx')
    finally:
        scraper.close()

The scraper automatically handles Japanese characters and formatting!

The full source code: https://mohammadtanvir.gumroad.com/l/GoogleMapsRestaurantDataScraper

Performance Metrics

Based on extensive testing:

- Speed: 3-5 seconds per restaurant (detailed page)

- Success Rate: 95%+ for active listings

- Recommended Batch Size: 50-100 restaurants per session

- Data Completeness: 18-20 fields on average (some restaurants have incomplete profiles)
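Those numbers make it easy to estimate how long a session will take. The sketch below is simple arithmetic on the 3-5 second figure; `estimate_minutes` is a hypothetical helper, and the one-minute allowance for Stage 1 scrolling is an assumption that will vary with the number of results.

```python
def estimate_minutes(n_restaurants, seconds_per_listing=(3, 5), scroll_minutes=1.0):
    """Return (low, high) total minutes: Stage 1 scrolling plus Stage 2 visits."""
    low_s, high_s = seconds_per_listing
    low = scroll_minutes + n_restaurants * low_s / 60
    high = scroll_minutes + n_restaurants * high_s / 60
    return low, high


low, high = estimate_minutes(100)
print(f"{low:.1f} to {high:.1f} minutes")  # 6.0 to 9.3 minutes
```

So a full 100-restaurant batch should finish in well under ten minutes.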

Real Performance Example

Query: "pizza restaurants in chicago"

Results: 87 restaurants

Time: ~6 minutes total

- Stage 1 (scrolling): 1 minute

- Stage 2 (details): 5 minutes

Data Fields: Average 19/20 per restaurant

Ethical Considerations & Best Practices

✅ Recommended Uses

- Personal research and analysis

- Academic studies

- Business intelligence for your own company

- Market research

- Location analysis

⚠️ Important Guidelines

- Respect Rate Limits: Don’t scrape thousands of listings at once

- Use Reasonable Delays: Keep 2-3 second delays between requests

- Follow Terms of Service: Review Google’s terms before large-scale scraping

- Respect Privacy: Don’t share personal data extracted

- Add Value: Use data for analysis, not raw resale

Legal Considerations

Web scraping exists in a legal gray area. Best practices:

- Use data for personal/internal business purposes

- Don’t republish raw scraped data commercially

- Respect robots.txt files

- Follow data protection regulations (GDPR, CCPA)

- When in doubt, consult legal counsel

Common Challenges & Solutions

Challenge 1: “Elements Not Found” Errors

Cause: Google Maps updates its HTML structure

Solution: The scraper uses multiple CSS selectors and fallback methods
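The fallback idea can be sketched as follows. This is an illustration under assumptions: `first_match` is a hypothetical helper, `find` is a callable you supply that returns the text for a CSS selector or raises when nothing matches, and the selectors in the example are placeholders, not real Google Maps selectors.

```python
def first_match(find, selectors, default="N/A"):
    """Try each CSS selector in order; return the first non-empty text found."""
    for selector in selectors:
        try:
            text = find(selector)
        except Exception:
            continue  # selector no longer matches the page: try the next one
        if text:
            return text
    return default  # every selector failed


# Stand-in for a page where only the older selector still matches.
page = {"h1.title-old": "Example Bistro"}


def fake_find(selector):
    return page[selector]  # raises KeyError when the selector is absent


print(first_match(fake_find, ["h1.title-new", "h1.title-old"]))  # Example Bistro
```

With Selenium, `find` could wrap `driver.find_element(By.CSS_SELECTOR, selector).text`, which raises when no element matches.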

Challenge 2: Slow Performance

Cause: Network latency or too many requests

Solution: Adjust time.sleep() values, or run during off-peak hours

Challenge 3: Incomplete Data

Cause: Some restaurants have minimal Google Maps profiles

Solution: Scraper handles this gracefully with “N/A” values

Challenge 4: Language Mismatches

Cause: Query location doesn’t match expected language

Solution: Manual language override option available in code

Future Enhancements & Roadmap

The scraper is functional, but there’s room for expansion:

🔮 Planned Features

- [ ] Review Sentiment Analysis: AI-powered review analysis

- [ ] Image Downloading: Save restaurant photos

- [ ] Menu Item Extraction: Parse menu items and prices

- [ ] Historical Tracking: Monitor rating changes over time

- [ ] CSV Export: Alternative to Excel

- [ ] GUI: User-friendly desktop app

- [ ] Proxy Rotation: For large-scale scraping

- [ ] Multi-threading: Parallel scraping for speed

The Business Opportunity

This scraper represents more than just a technical project—it’s a business automation tool that:

- Saves Time: Hours of manual work → Minutes of automated scraping

- Enables Data-Driven Decisions: Comprehensive datasets for analysis

- Creates Opportunities: Lead generation, market research, competitive intelligence

- Scales: Works for any location and cuisine type

It's useful whether you're a:

- 📊 Market researcher

- 🍕 Restaurant owner

- 💼 Business consultant

- 🎓 Academic researcher

- 💻 Data analyst

- 🚀 Entrepreneur

This tool provides instant access to structured business data.

Getting Started Today

The complete Google Maps scraper is available with:

- ✅ Full Python source code

- ✅ Comprehensive README documentation

- ✅ Example usage scripts

- ✅ Troubleshooting guide

- ✅ Excel export functionality

No expensive subscriptions. No API limits. No manual data entry.

Just clean, automated data extraction that works out of the box.

Final Thoughts

Building this scraper taught me valuable lessons about web automation, data extraction, and creating tools that solve real problems. What started as a personal productivity hack has become a robust solution for anyone who needs Google Maps data.

The power of automation isn’t just about saving time—it’s about unlocking insights that would be impossible to gather manually. When you can analyze hundreds of businesses in minutes instead of days, you can make better decisions, identify opportunities faster, and stay ahead of the competition.

FAQs

Q: Is web scraping legal?

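A: It depends on your jurisdiction and how you use the data. Scraping for personal research and analysis is generally lower risk, but it still sits in a legal gray area. Always review the terms of service and consult legal counsel for commercial use.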

Q: Will Google block me?

A: The scraper includes rate limiting and anti-detection measures. Follow best practices (reasonable delays, batch limits) to minimize risk.

Q: Can I scrape other businesses besides restaurants?

A: Yes! The scraper works for any Google Maps category: hotels, gyms, salons, retail stores, etc.

Q: How often can I run the scraper?

A: For personal use, once or twice daily is reasonable. For commercial use, consider the official Google Places API.

Q: What if Google Maps changes its layout?

A: The scraper may need CSS selector updates. The code is well-commented for easy modifications.

Q: Can I customize what data is extracted?

A: Absolutely! The code is modular and easy to extend with additional fields.

Ready to automate your data collection? Stop spending hours on manual research and start making data-driven decisions today.

Have questions or want to share how you’re using the scraper? Drop a comment below!

Tags: #WebScraping #Python #GoogleMaps #DataExtraction #Automation #BusinessIntelligence #MarketResearch #Selenium #DataAnalysis #ProductivityTools